robot (bot)

The term robot is used both for program-controlled machines that position and assemble components or parts without human intervention and for programs that act as agents on behalf of a user or server. On the Internet, these software bots are also known as spiders or crawlers.

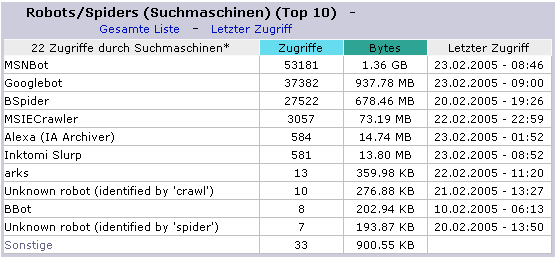

Search engines use robots to search the Internet automatically for new and updated web pages by following links on their own. The search engines send them out to crawl web servers. These search engine bots (Google's are called Googlebots) capture, classify and index documents by keyword and feed the website data back to the search engine. Depending on how they work, robots or crawlers either capture only new or updated web pages, in which case they are called freshbots, or perform deep indexing and transmit all subpages of a website, in which case they are called deepbots. The technique that crawlers use is called crawling.
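The link-following behavior described above can be illustrated with a minimal sketch. It is not how any particular search engine works: the in-memory `PAGES` dictionary stands in for real HTTP requests, and the names `LinkExtractor`, `crawl` and `max_depth` are assumptions made for this example. A depth limit loosely mimics the difference between a shallow pass and deep indexing of all subpages.

```python
from collections import deque
from html.parser import HTMLParser

# Hypothetical in-memory "web": path -> HTML body (stands in for HTTP fetches).
PAGES = {
    "/": '<a href="/a">A</a> <a href="/b">B</a>',
    "/a": '<a href="/b">B</a> <a href="/c">C</a>',
    "/b": '<a href="/">home</a>',
    "/c": "no links here",
}


class LinkExtractor(HTMLParser):
    """Collects the href targets of all <a> tags in a document."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            for name, value in attrs:
                if name == "href" and value:
                    self.links.append(value)


def crawl(start, pages, max_depth=None):
    """Breadth-first crawl from `start`; returns pages in visiting order.

    With max_depth=None every reachable page is visited (deep crawl);
    a small max_depth stops following links beyond that depth.
    """
    seen = {start}
    order = []
    queue = deque([(start, 0)])
    while queue:
        url, depth = queue.popleft()
        body = pages.get(url)
        if body is None:
            continue  # unknown page: nothing to index
        order.append(url)
        if max_depth is not None and depth >= max_depth:
            continue  # index this page but do not follow its links
        parser = LinkExtractor()
        parser.feed(body)
        for link in parser.links:
            if link not in seen:
                seen.add(link)
                queue.append((link, depth + 1))
    return order
```

Calling `crawl("/", PAGES)` visits every reachable page, while `crawl("/", PAGES, max_depth=1)` stops after the pages linked directly from the start page. The `seen` set prevents the crawler from looping when pages link back to each other.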

Robots can be admitted, restricted to specific pages or kept out entirely with the Robots Exclusion Standard (RES). Their behavior can be influenced by header entries and by the ASCII file robots.txt in the root directory of the website. Individual robots can be addressed with simple directives, and the transfer of information can be restricted by not allowing certain files to be crawled. For example, the entry "Disallow: /Images/" excludes the contents of the /Images/ directory from crawling.
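As a hedged illustration of how a well-behaved robot might evaluate such rules, the following sketch uses Python's standard `urllib.robotparser` module. The robots.txt content, the bot names `ExampleBot` and `SomeBot`, and the helper `allowed` are assumptions invented for this example; only the "Disallow: /Images/" rule comes from the text above.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt: every robot is kept out of /Images/,
# and the (made-up) robot "ExampleBot" is excluded from the whole site.
ROBOTS_TXT = """\
User-agent: *
Disallow: /Images/

User-agent: ExampleBot
Disallow: /
"""

parser = RobotFileParser()
# parse() accepts the file's lines directly, so no network access is needed.
parser.parse(ROBOTS_TXT.splitlines())


def allowed(agent, path):
    """Return True if `agent` may fetch `path` under the rules above."""
    return parser.can_fetch(agent, path)
```

With these rules, `allowed("SomeBot", "/index.html")` is true, `allowed("SomeBot", "/Images/logo.png")` is false, and `allowed("ExampleBot", "/index.html")` is false. The file only expresses the site operator's wishes; it is up to each robot to read and respect it.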

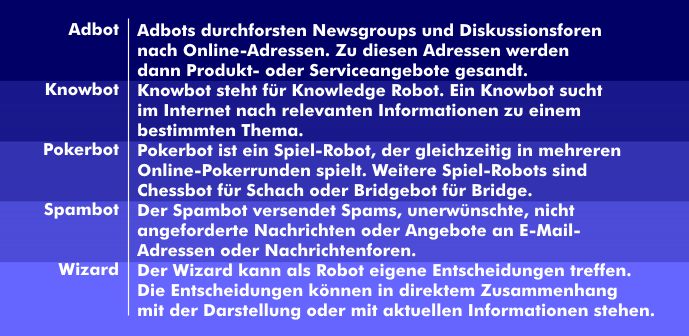

In IRC, robots are modified, unofficial client programs that perform control tasks and alert chatters to certain news. Some versions make it possible to break into the computer of an ordinary IRC user. Other bots automatically play games (poker bots), send unsolicited emails (spambots), browse newsgroups (adbots), work speech-based to handle voice communication between humans and technology (chatbots), or make their own decisions (wizards). In social networks, they are referred to as social bots or social robots.

Cybercriminals use bots to infect large numbers of computers belonging to unsuspecting users and combine them into a botnet, through which they can carry out attacks on computers and networks. These can be DoS or DDoS attacks or the spreading of spam, viruses and Trojans.