Image sensor

Image sensors are light-sensitive components that convert brightness into voltage. They are the central electronic component of digital cameras and camcorders, where they take the place of the negative film used in analog cameras. Image sensors are area sensors made up of many tiny photocells arranged in rows and columns as a matrix. The recording elements can be photodiodes or phototransistors, which convert the light falling on them into voltage by means of the photoelectric effect.

Technological differences of image sensors

Image sensors differ in their technology, size and number of pixels. The most commonly used basic technologies are the Charge Coupled Device (CCD) and Complementary Metal Oxide Semiconductor (CMOS), the latter used in CMOS sensors. Several further developments of these technologies offer improved characteristic values. These include the Foveon X3 sensor, the Contact Image Sensor (CIS), the Digital Pixel Sensor (DPS), the Intensified Charge Coupled Device (ICCD), the Super CCD (SCCD) and the Backside Illuminated Sensor (BSI).

In the backside-illuminated sensor, the light-sensitive layer lies above the metallization plane, so the incident light is not attenuated by the metallization. The graphene sensor takes a different approach based on graphene, an electrically conductive form of carbon that is light sensitive: it absorbs photons and emits electrons.

The photocell size (pixel size) and the number of photocells must be considered together with the sensor (chip) size. All three parameters are interrelated, and together they determine the image resolution, the light sensitivity, the dynamic range and the image noise.

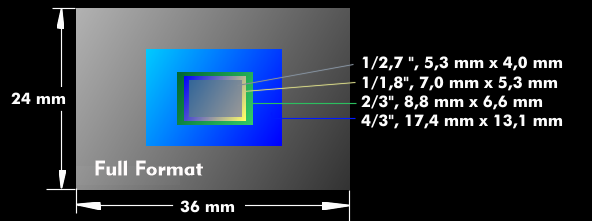

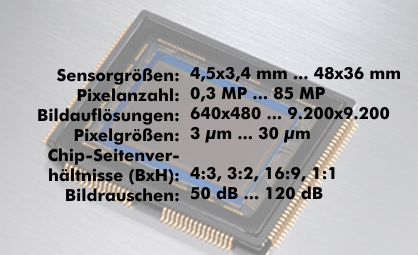

The actual sensor size refers exclusively to the sensor chip. There are fixed sensor sizes, ranging from 4.5 x 3.0 mm to 48 x 36 mm. The smaller sizes are specified in inches, such as 1/3.2", 1/2.7", 1/2.5", 1/1.8", 1/1.7" and 2/3". These inch designations do not convert directly into the millimeter dimensions of the chip. For example, the sensor size 2/3" measures 8.8 x 6.6 mm. The reasons are historical and lie in the usable area of the earlier image pickup tubes, the Vidicons. For these tubes, the diameter of the glass bulb was given in inches, while the effective size of the light-sensitive recording area inside the bulb was much smaller. The diagonal of the recording area was only 16.8 mm, with an aspect ratio of 4:3, and this value forms the basis for the size designations of image sensors.

The larger sensor formats are Four Thirds, which corresponds to a chip diagonal of 22.5 mm, the APS format (APS-C) with a size of 23 x 15 mm, and the 35 mm format, which measures 36 x 24 mm, as in classic 35 mm cameras. This last format is also referred to as full frame.
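The gap between an inch designation and the real chip dimensions can be checked with a little arithmetic. The following is a minimal sketch that uses only the dimensions quoted above; the function name `diagonal_mm` is chosen here for illustration.

```python
import math

INCH_MM = 25.4  # millimeters per inch


def diagonal_mm(width_mm: float, height_mm: float) -> float:
    """Diagonal of a rectangular sensor area (Pythagorean theorem)."""
    return math.hypot(width_mm, height_mm)


# Dimensions quoted in the text.
two_thirds = diagonal_mm(8.8, 6.6)    # sensor sold as 2/3"
full_frame = diagonal_mm(36.0, 24.0)  # 35 mm format

# What 2/3" would measure if the designation were taken literally.
nominal_mm = (2 / 3) * INCH_MM

print(f'2/3" sensor, actual diagonal: {two_thirds:.1f} mm')
print(f'2/3" taken literally:         {nominal_mm:.1f} mm')
print(f"35 mm format diagonal:        {full_frame:.1f} mm")
```

The actual diagonal of the 2/3" chip comes out at roughly two thirds of the literal inch value, which reflects the tube-based origin of the designation.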

Other characteristic values of image sensors

The pixel number depends on the chip size and the pixel size. It is a measure of the image resolution and is given in megapixels (MP). The specification states the actual number of pixels that the camera can display, not the number of photocells. It ranges from 0.3 MP to 10 MP for image sensors, and in professional digital cameras it is a multiple of 10 MP. From the number of pixels, the image resolution can be determined via the aspect ratio. Image sensors typically have an aspect ratio of 4:3, but 3:2, 16:9 and 1:1 are also used. For example, 1 megapixel at an aspect ratio of 4:3 gives an image resolution of 1,152 pixels horizontally and 864 vertically; 10 MP gives 3,650 x 2,750 pixels.
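The step from pixel count and aspect ratio to image resolution can be sketched as follows. This is an idealized calculation: it treats one megapixel as exactly 1,000,000 pixels, whereas real sensors use rounded dimensions such as the figures quoted above, so the results differ by a few pixels. The function name `resolution` is an assumption made for this example.

```python
import math


def resolution(megapixels: float, aspect_w: int, aspect_h: int) -> tuple[int, int]:
    """Idealized (width, height) in pixels for a pixel count and aspect ratio.

    Solves width * height = total and width / height = aspect_w / aspect_h.
    """
    total = megapixels * 1_000_000
    height = math.sqrt(total * aspect_h / aspect_w)
    width = height * aspect_w / aspect_h
    return round(width), round(height)


print(resolution(1, 4, 3))    # close to the 1,152 x 864 quoted in the text
print(resolution(10, 4, 3))   # close to the 3,650 x 2,750 quoted in the text
print(resolution(10, 3, 2))   # same pixel count, wider format
```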

The pixel size, or pixel pitch, ranges in practice from 1.5 µm to about 30 µm. The larger a pixel is, the more light it can absorb and the greater its light sensitivity. On the other hand, for a given chip size, larger pixels reduce the image resolution, although they also reduce the image noise. Digital cameras therefore require a sensible compromise between these three quantities.
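The relationship between chip size, pixel count and pixel pitch can be illustrated with a rough calculation. The sketch below assumes that the pixels fill the full sensor width with no gaps or border, which real chips only approximate; `pixel_pitch_um` is a name introduced here for illustration, and the widths and the pixel count are taken from the figures elsewhere in this article.

```python
def pixel_pitch_um(sensor_width_mm: float, horizontal_pixels: int) -> float:
    """Approximate pixel pitch in micrometers, assuming pixels span the full width."""
    return sensor_width_mm * 1000.0 / horizontal_pixels


# The same 10 MP resolution (3,650 pixels wide) on two chip sizes.
small = pixel_pitch_um(8.8, 3650)    # 2/3" chip, 8.8 mm wide
large = pixel_pitch_um(36.0, 3650)   # 35 mm format, 36 mm wide

# Light-collecting area per pixel grows with the square of the pitch.
area_ratio = (large / small) ** 2

print(f'2/3" chip:    {small:.2f} µm pitch')
print(f"35 mm format: {large:.2f} µm pitch")
print(f"Area per pixel is about {area_ratio:.1f} times larger on the 35 mm format")
```

At an identical pixel count, the larger chip offers each pixel far more area for collecting light, which is why resolution, sensitivity and noise cannot be chosen independently.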

Another characteristic value of image sensors is the sensor sensitivity. As with classic analog film, it is specified in ISO values, which correspond to the older ASA values. The base sensitivities of image sensors lie between ISO 50 and ISO 200 (50 ASA to 200 ASA).
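Because these ISO values form a linear scale, doubling the value doubles the sensitivity, which photographers count as one exposure stop. As a small sketch under that assumption, the span between two ISO values can be expressed in stops; the helper name `stops_between` is hypothetical.

```python
import math


def stops_between(iso_low: float, iso_high: float) -> float:
    """Number of exposure stops between two ISO values (one stop per doubling)."""
    return math.log2(iso_high / iso_low)


# The range of base sensitivities named in the text.
print(stops_between(50, 200))
```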

Since the semiconductor sensors themselves can only distinguish brightness values and not color tones, the incident light must be separated into the primary colors by color filters before it reaches the photocells. For this reason, many consumer cameras have Bayer filters or other color filters integrated directly on the semiconductor sensor, as in the EXR sensor. Other designs, such as the Foveon X3 sensor, use a multilayer filter concept.
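How a color filter mosaic restricts each photocell to one primary color can be sketched in a few lines. The example assumes the RGGB arrangement, one common layout of the Bayer pattern in which every 2 x 2 block holds one red, two green and one blue filter; other layouts exist. The function names and the tiny test image are illustrative only.

```python
def bayer_channel(row: int, col: int) -> str:
    """Color filter over the photocell at (row, col), assuming an RGGB layout."""
    if row % 2 == 0:
        return "R" if col % 2 == 0 else "G"
    return "G" if col % 2 == 0 else "B"


def mosaic(rgb_image):
    """Keep only the channel that passes the filter at each position.

    rgb_image is a list of rows, each row a list of (r, g, b) tuples.
    The result holds one brightness value per photocell.
    """
    index = {"R": 0, "G": 1, "B": 2}
    return [
        [pixel[index[bayer_channel(r, c)]] for c, pixel in enumerate(row)]
        for r, row in enumerate(rgb_image)
    ]


# Filter layout over a 4 x 4 group of photocells.
pattern = ["".join(bayer_channel(r, c) for c in range(4)) for r in range(4)]
print("\n".join(pattern))

# A 2 x 2 test image: each photocell records a single channel.
image = [[(10, 20, 30), (40, 50, 60)],
         [(70, 80, 90), (100, 110, 120)]]
print(mosaic(image))
```

The missing two color values per pixel are later estimated from neighboring photocells, a step that is not shown here.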