natural user interface (NUI)

A Natural User Interface (NUI) is a user interface that is operated interactively and intuitively, responding to touch, movement and speech. It is a further development of the graphical user interface (GUI), combining a multi-touch screen with sensors for a voice-controlled user interface (VUI).

When a finger touches the multi-touch screen and moves across it, the interface detects gestures and converts them into control commands. Gesture recognition is one control method of the Natural User Interface; others are speech recognition, face recognition, facial expression recognition and object recognition. Motion interpretation is already used in smartphones and tablets with multi-touch screens. Voice control has been available as well since 2011, for example to control the iPhone 4S.

Motion detection is based on interpreting simple finger movements. Moving one finger across the screen is converted into a scroll command, spreading two fingers across the display zooms, and touching a displayed letter enters it as text. Gesture recognition is also used in automotive technology, for example in the attention assistant, which recognizes signs of driver fatigue and generates an acoustic, visual or haptic warning signal.
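The mapping from finger movements to commands described above can be illustrated with a minimal sketch. It is not how any particular device implements gesture recognition: the function name `classify_gesture`, the representation of a finger path as a list of (x, y) points, and the `tap_radius` threshold are all assumptions chosen for illustration.

```python
from math import hypot

# One finger path: the (x, y) positions sampled while the finger is down.
Point = tuple[float, float]


def classify_gesture(tracks: list[list[Point]], tap_radius: float = 10.0) -> str:
    """Map finger paths to a command name (hypothetical, simplified rules).

    tracks holds one non-empty path per finger. tap_radius is an assumed
    threshold, in pixels, below which movement is treated as no movement.
    """
    if len(tracks) == 1:
        (x0, y0), (x1, y1) = tracks[0][0], tracks[0][-1]
        # A finger that barely moves is a tap (e.g. pressing a letter key).
        if hypot(x1 - x0, y1 - y0) <= tap_radius:
            return "tap"
        # A finger that travels across the screen is a scroll.
        return "scroll"

    if len(tracks) == 2:
        a, b = tracks
        # Compare the distance between the two fingers at start and end.
        start = hypot(a[0][0] - b[0][0], a[0][1] - b[0][1])
        end = hypot(a[-1][0] - b[-1][0], a[-1][1] - b[-1][1])
        if end > start + tap_radius:
            return "zoom_in"   # fingers spread apart
        if end < start - tap_radius:
            return "zoom_out"  # fingers pinched together

    return "unknown"


if __name__ == "__main__":
    print(classify_gesture([[(0, 0), (0, 120)]]))
    print(classify_gesture([[(100, 100), (50, 100)], [(120, 100), (200, 100)]]))
```

A real recognizer would also consider timing, movement direction and intermediate points; this sketch only compares the start and end of each path.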

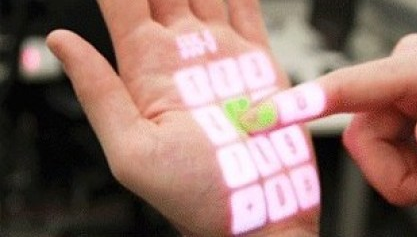

Microsoft has further developed the Natural User Interface and implemented it in OmniTouch. What makes this development interesting is that the touchscreen can be projected onto any object or surface, such as a wall or a table, and operated there. The surface of the object thus becomes a touch surface.