human machine interface (HMI)

The human-machine interface (HMI), also called the man-machine interface (MMI, or MMS after the German abbreviation), is the user interface between a person and a machine. It is found wherever a menu is shown on a display and the person and the machine conduct a dialog through that menu, so that the two interact.

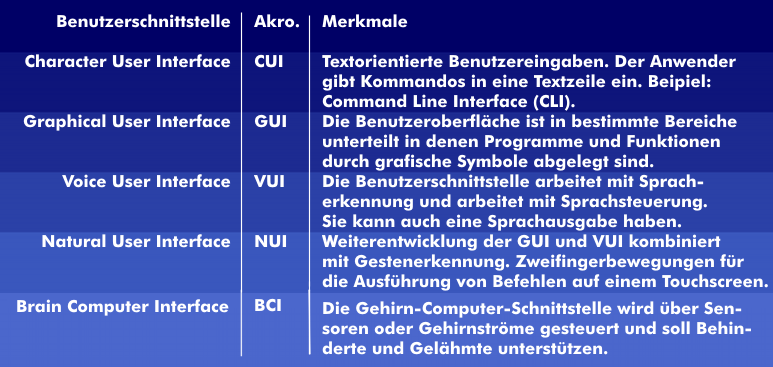

The man-machine interface determines how person and machine communicate directly with each other: how the user gives instructions to the machine, and in what form the machine executes those instructions and outputs the results. In the early years of computer technology, user input was text-oriented: the operator had to type special commands that were interpreted by the operating system. This kind of interface was called a character user interface (CUI).
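The text-oriented dialog described above can be illustrated with a minimal sketch of a command interpreter. The command names `echo` and `add` and the function `interpret` are hypothetical and chosen only for illustration; they do not correspond to any particular operating system.

```python
import shlex


def cmd_echo(args):
    """Return the arguments unchanged, joined by single spaces."""
    return " ".join(args)


def cmd_add(args):
    """Return the sum of the integer arguments as text."""
    return str(sum(int(a) for a in args))


# Hypothetical command table: typed command name -> handler function.
COMMANDS = {"echo": cmd_echo, "add": cmd_add}


def interpret(line):
    """Parse one typed line and return the machine's textual response."""
    tokens = shlex.split(line)          # split the line into words
    if not tokens:
        return ""                       # empty input produces no output
    name, args = tokens[0].lower(), tokens[1:]
    handler = COMMANDS.get(name)
    if handler is None:
        return f"unknown command: {name}"
    return handler(args)


if __name__ == "__main__":
    print(interpret("echo hello world"))
    print(interpret("add 2 3"))
```

The sketch shows why such interfaces were considered hard to use: the operator has to know the exact command names and argument order in advance, because the interpreter offers no visual guidance.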

From Character User Interface to Human Machine Interface

Because this early form of input was complex and unintuitive, the graphical user interface (GUI) was developed, in which input is made with a mouse and keyboard or via touchscreens and multi-touch screens. Later came voice user interfaces (VUI) and voice browsers, which are controlled by speech, and natural user interfaces (NUI), which are controlled by gestures. The form a user interface takes depends on the kind of human-machine communication for which it is optimized.

The variety of human-machine interfaces is evident in computers, cell phones, televisions, Braille terminals, and numerical controls, whose user interfaces are completely different from one another.

In addition to visual interfaces, there are acoustic ones, which are becoming increasingly important. The best known is single-channel acoustic input and output of speech via a headset. There are also multi-channel methods in which the user has no physical contact with the equipment and can move freely. These methods require multi-channel recording and playback techniques as well as intelligent signal processing.

To ensure accessibility for people with disabilities, there are user interfaces with voice control, gesture control, and even thought control: brain-computer interfaces (BCI).

Future technology with haptic interaction

Current HMI technologies work with visual interaction: the operator makes an input and sees its execution on the screen as feedback. Even on touchscreens, where the operator is in direct contact with the surface, the execution of the function is only shown on the display.

The interaction is different when mechanical components are actuated. When a pushbutton or key is pressed, immediate feedback reaches the fingertip in the form of counterpressure. Touchscreens with haptic simulation are intended to reproduce this feedback by moving the screen surface. When the user presses a function key, the screen moves vertically for a short time, which the fingertip perceives, simulating the feel of a real keystroke. The vertical movement can be produced by various methods: electromagnetic actuators, electrostatic fields, or the piezoelectric effect.
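The haptic principle described above (a key press triggers a brief vertical movement of the screen) can be sketched as a small simulation. Everything here is an assumption made for illustration: the class names `SimulatedActuator` and `HapticTouchscreen`, the key layout, and the pulse values of 0.1 mm and 10 ms are hypothetical and not taken from any real device or driver.

```python
from dataclasses import dataclass, field


@dataclass
class SimulatedActuator:
    """Stand-in for an electromagnetic, electrostatic, or piezo drive."""
    log: list = field(default_factory=list)

    def pulse(self, amplitude_mm, duration_ms):
        # A real actuator would move the screen; the simulation only records it.
        self.log.append((amplitude_mm, duration_ms))


@dataclass
class HapticTouchscreen:
    actuator: SimulatedActuator
    keys: dict                    # key name -> (x0, y0, x1, y1) in pixels
    amplitude_mm: float = 0.1     # assumed vertical travel, illustrative only
    duration_ms: int = 10         # assumed pulse length, illustrative only

    def touch(self, x, y):
        """Return the key under (x, y) and fire one haptic pulse, else None."""
        for name, (x0, y0, x1, y1) in self.keys.items():
            if x0 <= x < x1 and y0 <= y < y1:
                self.actuator.pulse(self.amplitude_mm, self.duration_ms)
                return name
        return None               # touches outside any key give no feedback


if __name__ == "__main__":
    screen = HapticTouchscreen(SimulatedActuator(), {"OK": (0, 0, 100, 50)})
    print(screen.touch(10, 10), screen.actuator.log)
```

In this sketch only touches that land on a function key produce a pulse, which mirrors the counterpressure of a mechanical key: the fingertip receives feedback exactly when a key is actuated.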